When I was a 9th grader at Renaissance High School, and an identified “gifted and talented kid,” one of the classes that kept me up at night was Introduction to Physical Science (IPS) with Mr. Ishakis. An excitable man with enough energy for science to power NASA, he would bound around our classroom, adjusting his yarmulke, expecting us to have answers for problems we had never even heard of before. I told my mom I was sure he wanted us to cure cancer at the age of 13. But I am so grateful for Mr. Ishakis all these years later. He was teaching us critical thinking.

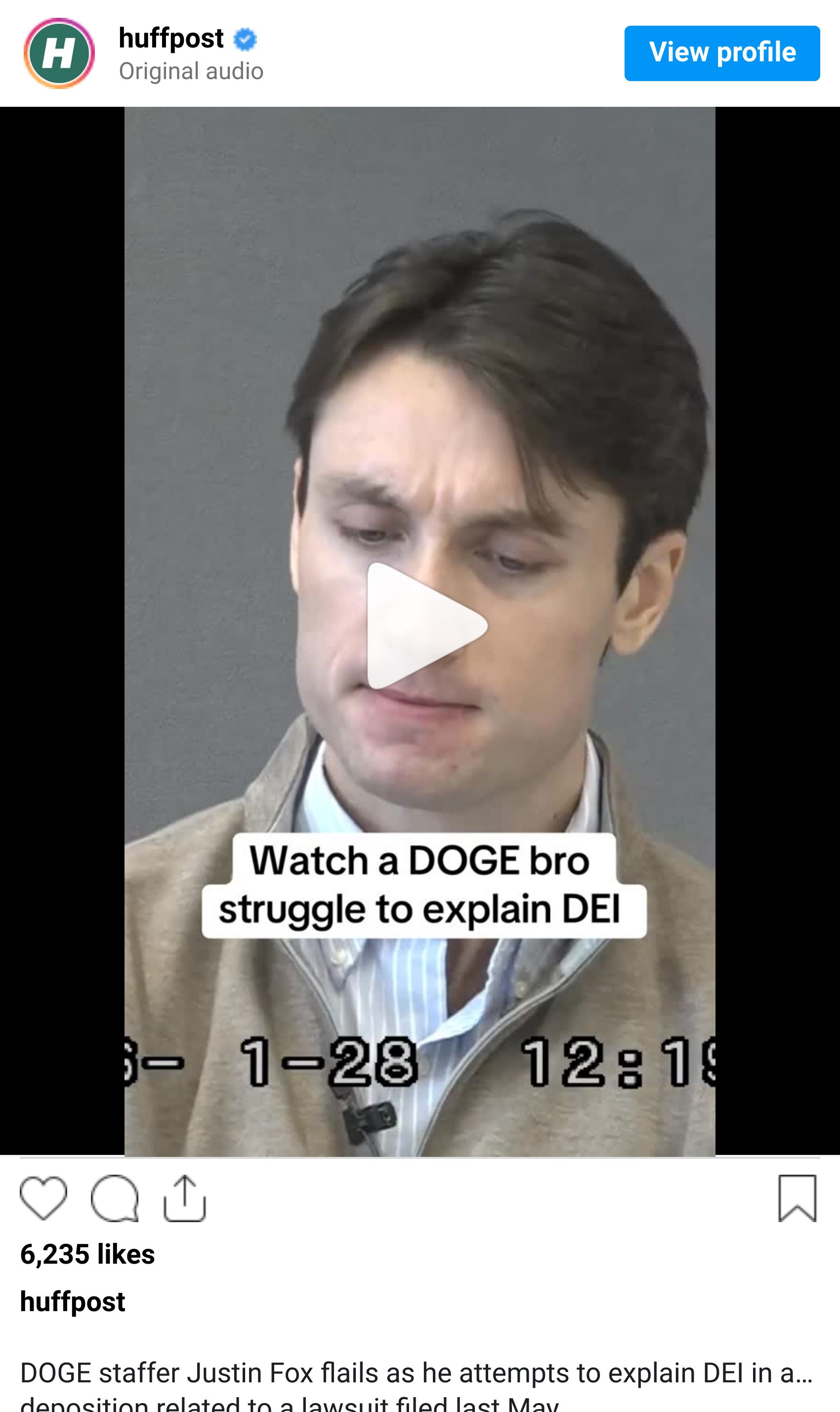

We can’t outsource our critical thinking or just make up definitions when it comes to people and their livelihoods. Some of you may have seen the testimony of DOGE staffer Justin Fox, who is named along with DOGE in a lawsuit brought by the American Historical Association. The suit came after DOGE used AI to cut millions of dollars in humanities grants, applying a very flawed definition of DEI (Diversity, Equity, and Inclusion) that relied on the also flawed definition in the current administration’s Executive Order.

Watch the video here and apologies if it induces rage or severe annoyance:

According to the American Historical Association, “DOGE fed grant descriptions into OpenAI’s ChatGPT generative artificial intelligence chatbot, asking it to decide if grants were “DEI.” They then entered ChatGPT’s responses into a spreadsheet compiling all NEH grants, including its “DEI rationale” and “Yes / No DEI?” replies. This ChatGPT-generated list was used in place of the list created by NEH staffers to identify which grants to cut. Grants that were flagged as “DEI” and then terminated included a documentary sharing the story of Jewish women’s slave labor during the Holocaust; an archival project on the lives of Italian Americans; a project to digitize photograph collections of Appalachian residents; and multiple projects to preserve endangered Native American languages and cultures.” They go on to state, “DOGE staffers violated the Federal Equal Protection Clause of the 5th Amendment by flagging grant descriptions as “DEI” solely because they included “BIPOC (Black, Indigenous, People of Color),” “homosexual,” “LGBTQ,” and “Tribal,” among other terms.”

This kind of formulaic, arbitrary thinking is something many inclusion operators and strategists are familiar with from our encounters with business leaders. They believe something isn’t necessary without having a clear understanding of what the thing actually means. And when asked to define the thing they think they are against, or think isn’t a priority, they either struggle with it or cite misinformation, despite the efforts of the many practitioners who were told to make the business case for DEI in the early 2020s. Some instances of that request, in my humble opinion, were lessons in wasting time, because it didn’t matter if we did link it to business outcomes: without a fundamental understanding of why it’s important on a global human level, the work was never going to receive the priority it deserves. For many inclusion workers, this environment is only getting worse, and this video is one of the reasons why.

But I also want to turn to the use of AI to determine the outcome of something with so much impact on society.

Some of you may know I sell merch with my business motto: Feelings aren’t facts. I get some pushback on that because people think I mean feelings don’t matter. They do! But data helps us make decisions in the workplace, and a lot of feelings are wrapped in lived experiences that may or may not include unexamined biases and misinformation. Your gut is not unbiased. Not even years of experience can unravel unchecked biases. It’s constant work for everyone, including those of us who do the work.

One of my new favorite follows is Shae O. Shae is deeply qualified to talk about technology and AI, and I’ve been enjoying how Shae frames conversations about technology from a social impact and user perspective. Shae’s recent Instagram post really hit the nail on the head for me. In short, Shae explains that we should be having conversations about the baseline skill sets required to use AI effectively, which is something I’ve been thinking about. As demonstrated above, AI was used as the decision maker, fed a series of ill-informed short phrases, instead of as a tool to inform human decision making. Shae argues the baseline requirements should be:

- Reading dense information carefully
- Understanding nuance in complex arguments
- Following multi-step reasoning
- Evaluating whether a claim is accurate or nonsense

Shae says using AI is the easy part; thinking, with and without AI, is the hard part. “These are skills that almost nobody is investing in right now,” Shae’s post says.

If we outsource our critical thinking skills, especially when it comes to diversity, equity, and inclusion (which affects almost everyone since everyone has at least two social identities), then we are contributing to exclusion, disengagement, and distrust in the workplace. I, for one, don’t trust Justin Fox after seeing that video, so imagine what it must have felt like to be in that workplace environment. I believe we can do better! One of my favorite people in the universe, Torin Ellis, has just launched a product called Ngoma that addresses this very thing. Ngoma’s mission is to use accessibility‑aware, disabled expertise to improve technology. Check out Ngoma’s framework on their website if you are looking for a product like this or an example of how we can use AI for impact without outsourcing critical thinking.

In the end, though, I feel like Mr. Ishakis would be proud of me for highlighting this needed redirection in technology and human-focused equity. We shouldn’t have to suffer massive grant cuts, which robbed us of so many projects that could have shared lived experiences across the spectrum, to discover that we can’t take humans out of decision making.
